ConicIT Summary

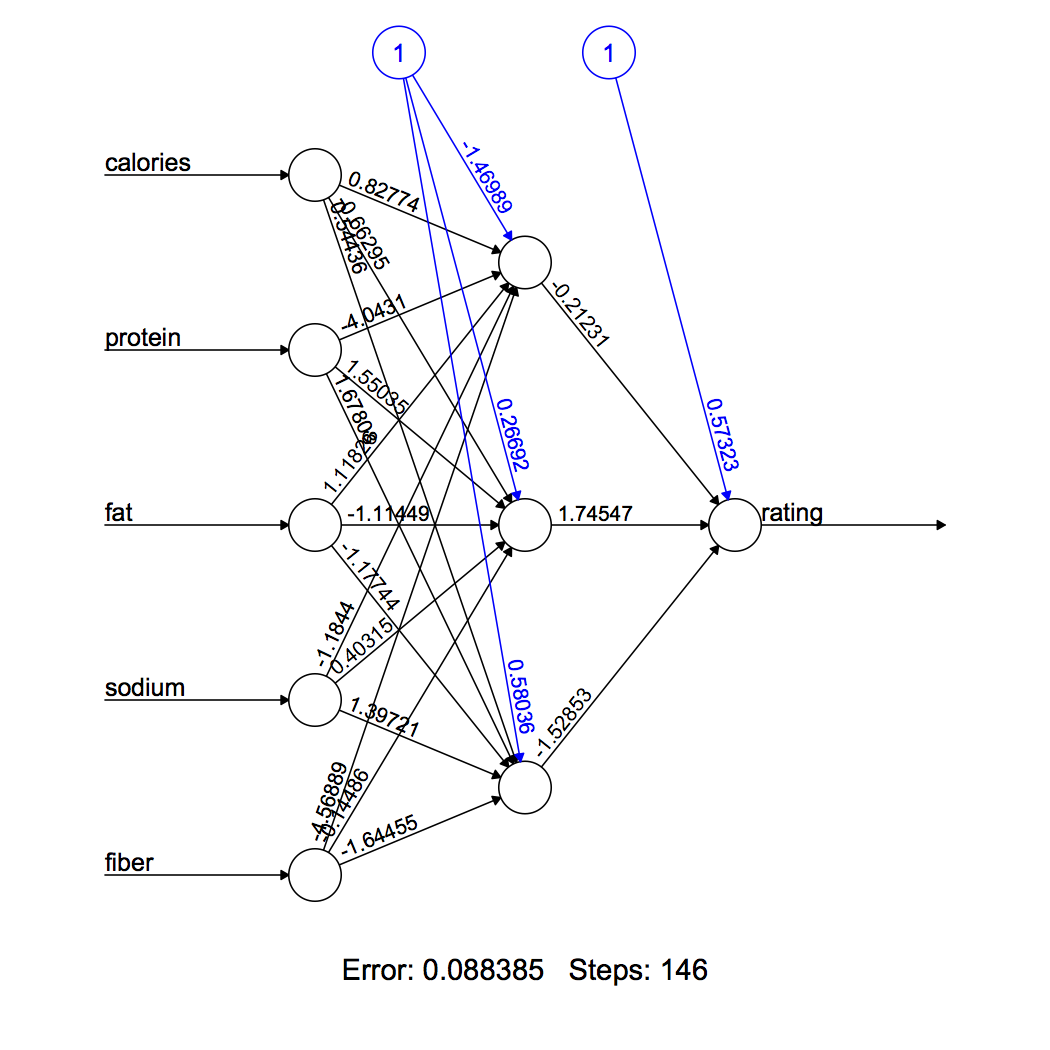

Take your existing performance monitoring environment to the next level. ConicIT is a software solution that reads thousands of performance and stability metrics per minute from your performance monitors, such as TMON, Omegamon, Mainview, Sysview and others. ConicIT processes and analyzes these metrics with machine learning technology and automatically generates alerts about problems that even seasoned performance professionals might miss when looking at the same data.

Beyond automatic analytics and alerts, ConicIT provides an efficient, user-friendly web interface for browsing relevant performance data in aggregated form, including the values and graphs from the very moment a problem occurs. With ConicIT in place, you no longer need to jump tediously between many different monitors and screens: ConicIT aggregates the data from different sources into a single view, so you can easily gain either high-level or low-level insight into your application performance.

ConicIT also creates important calculated variables, such as ratios and summaries. It also derives critical information that the monitors do not provide, for example turning the cumulative CPU time of a job or transaction into its real-time CPU consumption. Much of this information is not available in any of the monitors. The real-time CPU consumption is calculated from the rate at which the cumulative CPU time rises during each minute.
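To make the CPU calculation concrete, here is a minimal Python sketch that turns periodic samples of a cumulative CPU-time counter into a CPU-seconds-per-minute rate. The function name `cpu_rate_per_minute`, the `(timestamp, cumulative_cpu_seconds)` sample format and the counter-reset rule are assumptions for illustration, not ConicIT's actual implementation.

```python
# Hypothetical sketch: derive a real-time CPU rate from a cumulative CPU-time counter.
from typing import List, Tuple


def cpu_rate_per_minute(samples: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Convert (timestamp_seconds, cumulative_cpu_seconds) samples, sorted by time,
    into (timestamp_seconds, cpu_seconds_per_minute) for the end of each interval."""
    rates = []
    for (t0, c0), (t1, c1) in zip(samples, samples[1:]):
        elapsed = t1 - t0
        if elapsed <= 0:
            continue  # skip duplicate or out-of-order timestamps
        delta = c1 - c0
        if delta < 0:
            # Assumption: a falling counter means the job or transaction restarted,
            # so the new cumulative value is the CPU time used since the restart.
            delta = c1
        # Normalize the CPU time consumed in this interval to a one-minute rate.
        rates.append((t1, delta * 60.0 / elapsed))
    return rates


# A job sampled once a minute whose cumulative CPU time grows by 3 s, 12 s, then 1.5 s.
samples = [(0, 100.0), (60, 103.0), (120, 115.0), (180, 116.5)]
print(cpu_rate_per_minute(samples))  # [(60, 3.0), (120, 12.0), (180, 1.5)]
```

Dividing by the actual elapsed time, rather than assuming exactly 60 seconds, keeps the rate correct when samples arrive late or early. A rate of 12 CPU-seconds per minute corresponds to 20% of one processor during that minute.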

One of ConicIT's major advantages is its dynamic alerting, which is based on machine learning and statistical algorithms. Traditional monitors offer simple static alerts based on fixed thresholds, but static alerts tend to fire too late, and most of them are false alarms. ConicIT addresses this problem with its advanced algorithms. It automatically learns the typical behavior of each metric for every day of the week and every hour of the day, so ConicIT knows (and shows you) the expected range of each performance metric. ConicIT also learns how stable each metric is, and how often and for how long it may stay outside its normal range. Based on this analysis, ConicIT recognizes when one or more metrics show an anomaly. In that case, ConicIT sends you an alert with information and graphs about the problem. These proactive alerts arrive much earlier and are more accurate than those of static-threshold performance systems, giving you time to solve problems before they affect your end users, clients and customers.
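The idea of a per-day, per-hour expected range can be illustrated with simple statistics. The hypothetical `SeasonalBaseline` class below is not ConicIT's algorithm, which the text describes only as machine learning and statistical algorithms. Instead, it substitutes a plain mean ± k standard deviations band for each (weekday, hour) bucket, and treats the longest out-of-range streak seen in the training history as the metric's tolerated excursion. The class name, the `k` parameter and the streak rule are all assumptions.

```python
# Hypothetical sketch of a seasonal (weekday x hour) baseline; not ConicIT's algorithm.
import statistics
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, Iterable, List, Tuple

Bucket = Tuple[int, int]  # (weekday: 0=Monday..6=Sunday, hour: 0..23)


class SeasonalBaseline:
    def __init__(self, k: float = 3.0) -> None:
        self.k = k  # half-width of the expected band, in standard deviations
        self.ranges: Dict[Bucket, Tuple[float, float]] = {}
        self.tolerated_run = 0  # longest out-of-range streak seen in the training history
        self._run = 0  # current out-of-range streak in live data

    @staticmethod
    def _bucket(ts: datetime) -> Bucket:
        return ts.weekday(), ts.hour

    def fit(self, history: Iterable[Tuple[datetime, float]]) -> None:
        samples = sorted(history)
        groups: Dict[Bucket, List[float]] = defaultdict(list)
        for ts, value in samples:
            groups[self._bucket(ts)].append(value)
        # Expected range per bucket: mean +/- k * population standard deviation.
        for bucket, values in groups.items():
            mean = statistics.fmean(values)
            std = statistics.pstdev(values)
            self.ranges[bucket] = (mean - self.k * std, mean + self.k * std)
        # Learn how long the metric normally stays outside its band.
        run = 0
        for ts, value in samples:
            run = 0 if self.in_range(ts, value) else run + 1
            self.tolerated_run = max(self.tolerated_run, run)

    def in_range(self, ts: datetime, value: float) -> bool:
        # Buckets never seen in training get an unbounded range (no alert).
        low, high = self.ranges.get(self._bucket(ts), (float("-inf"), float("inf")))
        return low <= value <= high

    def observe(self, ts: datetime, value: float) -> bool:
        """Return True when the current out-of-range streak exceeds the learned tolerance."""
        self._run = 0 if self.in_range(ts, value) else self._run + 1
        return self._run > self.tolerated_run


# Four weeks of synthetic hourly data: busy (about 100) on weekdays 09:00-17:00,
# quiet (about 20) otherwise, shifted by +/-2 in alternating weeks.
start = datetime(2024, 1, 1)  # a Monday
history = []
for i in range(4 * 7 * 24):
    ts = start + timedelta(hours=i)
    busy = ts.weekday() < 5 and 9 <= ts.hour < 17
    noise = 2.0 if (i // 168) % 2 == 0 else -2.0
    history.append((ts, (100.0 if busy else 20.0) + noise))

baseline = SeasonalBaseline(k=3.0)
baseline.fit(history)
print(baseline.observe(datetime(2024, 1, 29, 3), 100.0))   # Monday 03:00 -> True
print(baseline.observe(datetime(2024, 1, 29, 10), 100.0))  # Monday 10:00 -> False
```

In this synthetic example, a value of 100 is normal on a Monday at 10:00 but is flagged at 03:00, a distinction that a single static threshold cannot express.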

Combining early, proactive alerts raised when a problem starts with supporting information and graphs lets you and your team quickly pinpoint where the problem started and which team should work on resolving it. As a result, ConicIT reduces the need for war rooms and shortens the mean time to repair (MTTR).

Figure 1: A 30-hour graph

Figure 2: A single click on the left menu switches the view to any type of information from any point in time